Overview

About Advantech Container Catalog (ACC)

Advantech Container Catalog is a comprehensive collection of ready-to-use, containerized software packages designed to accelerate the development and deployment of Edge AI applications. By offering pre-integrated solutions optimized for embedded hardware, ACC simplifies the challenge of software-hardware compatibility, especially in GPU/NPU-accelerated environments.

| Feature / Benefit | Description |
|---|---|
| Accelerated Edge AI Development | Ready-to-use containerized solutions for faster prototyping and deployment |
| Hardware Compatible | Eliminates incompatibility between embedded hardware and software packages |
| GPU/NPU Access Ready | Supports passthrough for efficient hardware acceleration |
| Model Conversion & Optimization | Built-in AI model quantization and format conversion support |
| Optimized for CV & LLM Applications | Pre-optimized containers for computer vision and large language models |

Container Overview

The DEEPX NPU CLIP VLM Solution container runs multimodal AI models such as CLIP (Contrastive Language–Image Pretraining) on the DEEPX DX-M1 NPU. CLIP learns the relationship between images and text, which powers use cases such as image captioning, visual search, and zero-shot classification.

DEEPX - Powering the Edge with Smarter AI Chips

DX-M1 is DEEPX's edge AI accelerator, built on a proprietary NPU architecture to deliver powerful inference at low power draw.

Container Demo

Use Cases

- Smart Camera: Real-time edge AI analytics

- Edge & Storage Servers: Compact AI compute modules

- Autonomous Robotics: Embedded control and perception

- Industrial Systems: Factory automation and monitoring

Key Features

- Exceptional Power Efficiency: Up to 25 TOPS at only 3–5 W

- Integrated DRAM: High-speed internal memory for smooth multi-model execution

- XPU Compatibility: Works with x86, ARM, and other mainstream CPUs

- Cost-Optimized Design: Minimal SRAM footprint ensures affordability without sacrificing performance

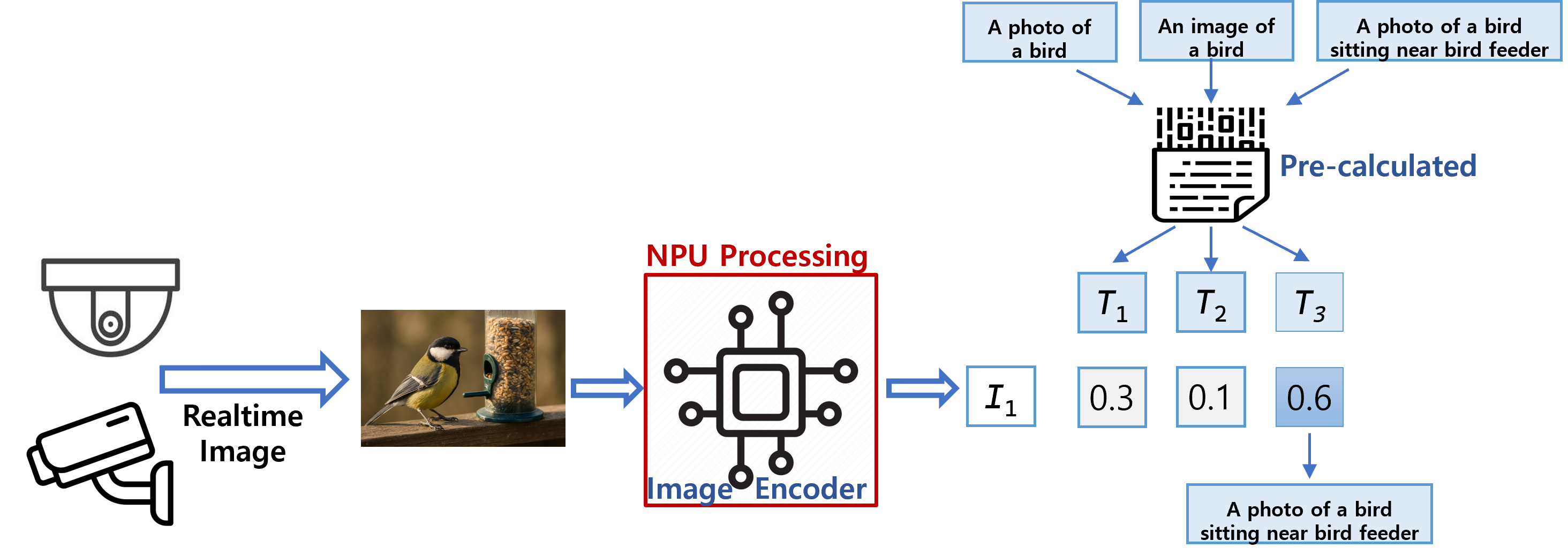

Image-to-Text Matching with CLIP

This figure illustrates a CLIP-based approach for generating textual descriptions from visual input. An image—such as a bird near a feeder—is encoded by the NPU, which runs the image encoder component of the CLIP model.

Meanwhile, a predefined list of candidate captions (e.g., “A photo of a bird”, “A photo of a bird sitting near a bird feeder”) is pre-encoded using the text encoder and stored.

When a real-time image is received (via wired or wireless transfer), the system compares its image embedding against the pre-calculated text embeddings. Each comparison produces a similarity score (e.g., T₁, T₂, T₃) between 0 and 1, indicating how closely each caption matches the image.

The system then applies a user-defined threshold to filter the candidates and returns the caption that best describes the visual content.
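The matching step described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the container's actual code: the function names `l2_normalize` and `match_caption`, the `threshold` default, and the `logit_scale` value of 100 are all assumptions made for the example. It also assumes that the 0-to-1 scores come from a softmax over scaled cosine similarities, which the description above does not state explicitly. The toy 3-dimensional vectors stand in for real encoder outputs.

```python
import numpy as np

def l2_normalize(x):
    """Scale vectors to unit length so a dot product equals cosine similarity."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def match_caption(image_emb, text_embs, captions, threshold=0.5, logit_scale=100.0):
    """Return (best caption or None, per-caption scores).

    Assumption: scores are a softmax over scaled cosine similarities,
    which yields values between 0 and 1 that sum to 1.
    """
    image_emb = l2_normalize(np.asarray(image_emb, dtype=np.float64))
    text_embs = l2_normalize(np.asarray(text_embs, dtype=np.float64))
    logits = logit_scale * (text_embs @ image_emb)
    logits -= logits.max()  # subtract the max to keep the exponentials stable
    scores = np.exp(logits) / np.exp(logits).sum()
    best = int(np.argmax(scores))
    # Reject the match when even the best caption falls below the threshold.
    caption = captions[best] if scores[best] >= threshold else None
    return caption, scores

# Toy embeddings (hypothetical); text embeddings would be pre-encoded and stored.
captions = ["A photo of a bird", "A photo of a cat", "A photo of a car"]
text_embs = np.eye(3)
image_emb = np.array([0.9, 0.1, 0.1])

caption, scores = match_caption(image_emb, text_embs, captions)
print(caption, scores)
```

Because the text embeddings are computed once and reused, each incoming image costs only one image-encoder pass plus a small matrix-vector product.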

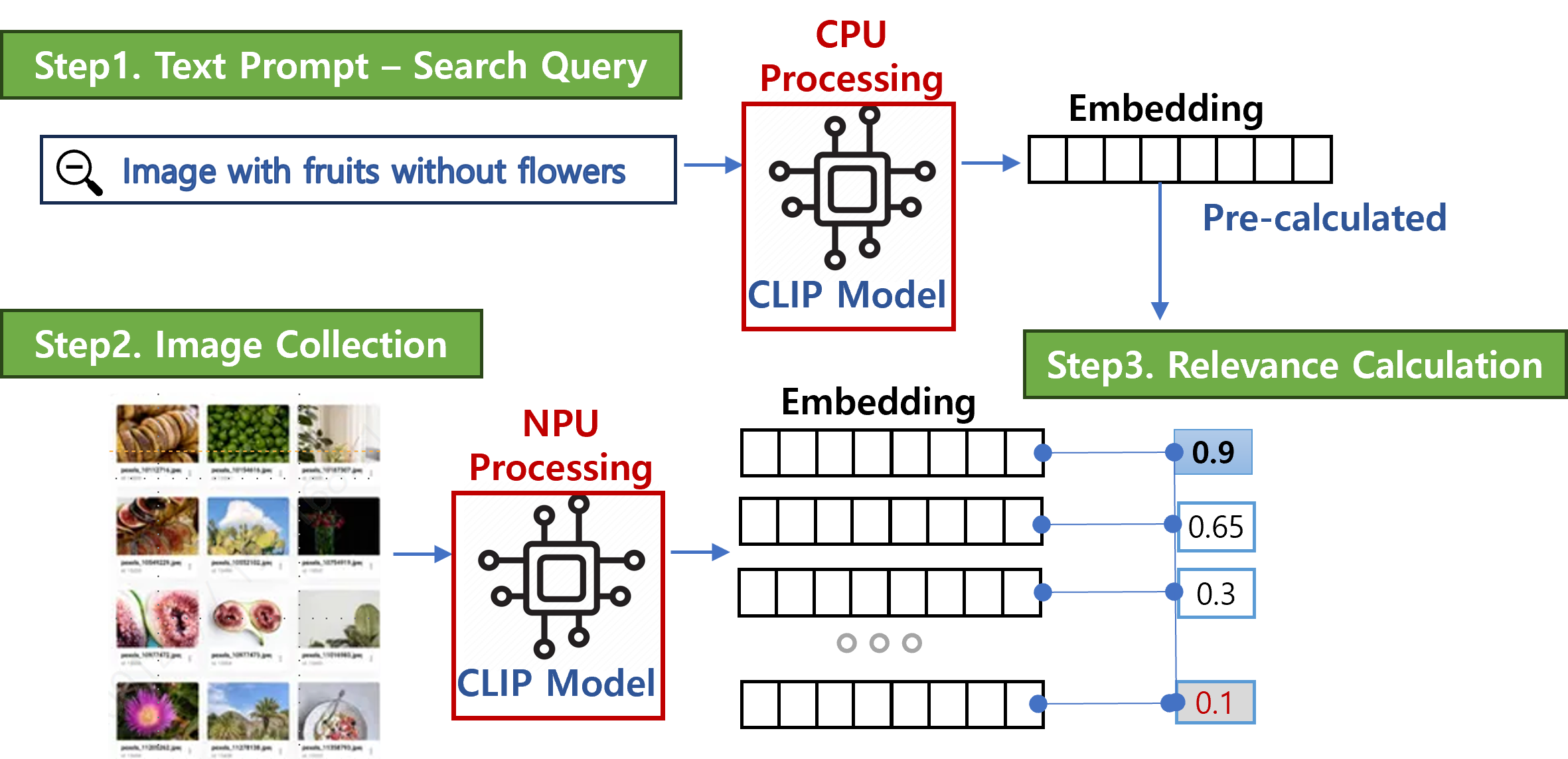

Text-to-Image Retrieval with CLIP

This figure illustrates a typical use case of CLIP for text-guided image retrieval. A user-provided query—such as “image with fruits without flowers”—is processed by the CPU, which runs the text encoder.

At the same time, a collection of candidate images is processed by the NPU, which executes the image encoder. Since CLIP is composed of two parallel encoders—one for text and one for images—this deployment distributes the workload across CPU and NPU accordingly: the CPU handles text, while the NPU accelerates image processing.

The system computes similarity scores between the text embedding and each image embedding. The image with the highest score is selected and returned as the most relevant result.
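A minimal sketch of the retrieval step follows, assuming cosine similarity as the scoring function (the usual choice for CLIP embeddings, though the text above does not name it). The function `rank_images` and the 2-dimensional toy embeddings are hypothetical; in a real deployment the text embedding would come from the CPU-side text encoder and the image embeddings from the NPU-side image encoder.

```python
import numpy as np

def rank_images(text_emb, image_embs):
    """Return (image indices sorted best-first, cosine similarity per image)."""
    text_emb = np.asarray(text_emb, dtype=np.float64)
    image_embs = np.asarray(image_embs, dtype=np.float64)
    # Normalize so the dot product below is a cosine similarity.
    text_emb = text_emb / np.linalg.norm(text_emb)
    image_embs = image_embs / np.linalg.norm(image_embs, axis=1, keepdims=True)
    scores = image_embs @ text_emb
    order = np.argsort(-scores)  # descending by score
    return order, scores

# Toy embeddings (hypothetical) for three candidate images and one query.
image_embs = np.array([[1.0, 0.0], [0.6, 0.8], [0.0, 1.0]])
text_emb = np.array([0.5, 1.0])

order, scores = rank_images(text_emb, image_embs)
print("best image index:", int(order[0]))
```

Returning the full ordering rather than only the top index makes it straightforward to show the top few results instead of a single image.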

Host Device Prerequisites

Before installing the program, ensure your host device meets the following hardware and software requirements to guarantee proper setup and operation.

| Item | Specification |
|---|---|
| Compatible Hardware | x86-based or aarch64-based Linux system |
| Python Version | 3.11 or later |
| Host OS | Ubuntu 20.04 / 22.04 / 24.04 |
| Required Software Packages | See the list below |
| Software Installation | Manual installation using a Python virtual environment (venv) |

⚠️ Note: This program requires Python 3.11 or later. Since this version is not included by default in Ubuntu 20.04 or 22.04, it is strongly recommended to use a Python virtual environment (venv) to avoid conflicts with the system Python.
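As a quick sanity check before installing anything, the short script below reports whether the running interpreter meets the version requirement and whether it is running inside a virtual environment. The helper name `check_environment` is hypothetical; the check itself relies only on the standard `sys` module.

```python
import sys

def check_environment(min_version=(3, 11)):
    """Return (version_ok, in_venv) for the running interpreter."""
    version_ok = sys.version_info[:2] >= min_version
    # Inside a venv, sys.prefix points at the environment while
    # sys.base_prefix still points at the base installation.
    in_venv = sys.prefix != sys.base_prefix
    return version_ok, in_venv

version_ok, in_venv = check_environment()
print(f"Python {sys.version_info.major}.{sys.version_info.minor}: "
      f"version_ok={version_ok}, in_venv={in_venv}")
```

Run it with the interpreter you intend to use for the program; if `in_venv` is `False`, the packages would be installed into the system or user Python rather than an isolated environment.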

Required Software Packages on Host Device (Python venv)

The following Python packages must be installed within a Python 3.11 virtual environment.

Unless otherwise noted, these are CPU-only versions to ensure compatibility across most systems.

| Component | Version | Description |
|---|---|---|
| Python | 3.11.x | Core programming language |
| pip | 21.0 or later | Python package manager |
| torch | 2.0.0+cpu | Deep learning framework |
| torchvision | 0.15.1+cpu | Pre-trained models and image transformations for PyTorch |
| torchaudio | 2.0.1+cpu | Audio processing library for PyTorch |
| numpy | latest | Numerical computing library |
| pandas | latest | Data analysis and manipulation toolkit |
| tqdm | latest | Progress bar utility |
| regex | latest | Advanced regular expression support |
| ftfy | latest | Unicode text cleaning and fixing |
| packaging | latest | Package version and metadata utilities |
| certifi | latest | SSL certificate bundle for secure connections |
| overrides | latest | Method override utilities for Python classes |
| onnxruntime | 1.16.3 or later | Cross-platform machine learning inference engine |
| opencv-python-headless | latest | Computer vision library (headless version) |
| PyQt5 | latest | GUI framework (Qt bindings for Python) |
| QtPy | latest | Abstraction layer for multiple Qt bindings |
| QDarkStyle | latest | Dark theme stylesheet for PyQt applications |
| pyqt-toast-notification | latest | Toast notification support for PyQt5 |
| debugpy | latest (optional) | Debugging support (VS Code-compatible) |
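Once the packages are installed, a small script can confirm which of them are visible to the active environment. The sketch below uses the standard library's `importlib.metadata`; the `REQUIRED` list simply mirrors the package names in the table above, and the helper `installed_versions` is a hypothetical name. It only reports what is installed and does not compare versions against the table.

```python
from importlib import metadata

# Distribution names taken from the table above (debugpy is optional and omitted).
REQUIRED = [
    "torch", "torchvision", "torchaudio", "numpy", "pandas", "tqdm",
    "regex", "ftfy", "packaging", "certifi", "overrides", "onnxruntime",
    "opencv-python-headless", "PyQt5", "QtPy", "QDarkStyle",
    "pyqt-toast-notification",
]

def installed_versions(packages):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions

for name, version in installed_versions(REQUIRED).items():
    print(f"{name:28s} {version or 'MISSING'}")
```

Any line marked `MISSING` indicates a package that still needs to be installed in the virtual environment.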

Container Quick Start Guide

For the container quick start guide, including the docker-compose file and more, please refer to the Advantech EdgeSync Container Repository.

About DEEPX

DEEPX is a leading AI semiconductor company focused on developing ultra-efficient on-device AI solutions.

With proprietary NPUs (Neural Processing Units), DEEPX enables high performance, reduced power consumption, and cost efficiency across applications in smart cameras, autonomous systems, factories, smart cities, consumer electronics, and AI servers. DEEPX is moving rapidly from sample evaluation to mass production to support global deployment.

Partnership with Advantech

DEEPX NPUs are embedded into Advantech’s industrial PCs.

- Ready-to-deploy Edge AI with ultra-fast, real-time processing

- All-in-one AI platform supporting instant multi-model inference

- Scalable and cost-effective AIoT solutions for real-world deployment

Copyright © 2025 Advantech Corporation. All rights reserved.